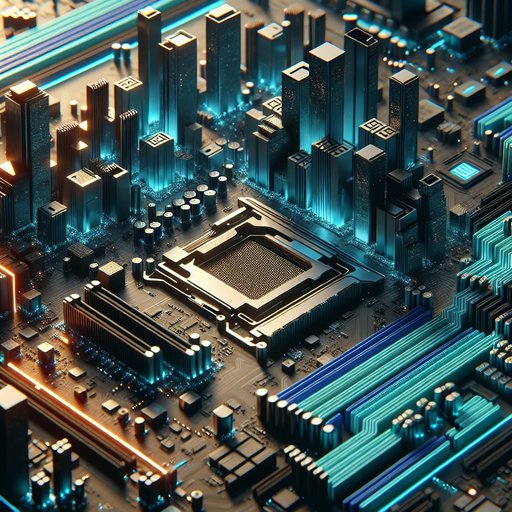

Modern motherboards have transformed from simple host platforms into dense, high-speed backplanes that quietly reconcile conflicting requirements: ever-faster I/O like PCIe 5.0, soaring transient power demands from CPUs and GPUs, and the need to integrate and interoperate with a sprawl of component standards. This evolution reflects decades of accumulated engineering discipline across signal integrity, power delivery, firmware, and mechanical design. Examining how boards reached today’s complexity explains why form factors look familiar while the underlying technology bears little resemblance to the ATX designs of the 1990s, and why incremental user-facing features mask sweeping architectural changes beneath the heatsinks and shrouds.

Motherboards sit at the center of computing’s evolution because they translate semiconductor roadmaps into usable systems. As processors integrated more functions and I/O speeds climbed, board designers assumed the role of high-speed interconnect engineers, not just component arrangers. The result is that a modern ATX board is effectively a controlled-impedance backplane with strict timing, loss, and crosstalk budgets. This shift determines what features appear on a platform, how many expansion devices it can sustain at full speed, and how long it can remain compatible as standards advance.

PCI Express exemplifies the challenge. Moving from PCIe 3.0’s 8 GT/s to 4.0’s 16 GT/s and now 5.0’s 32 GT/s doubles the Nyquist frequency at each step (4, 8, then 16 GHz), and because trace loss climbs steeply with frequency, every generation leaves less margin per centimeter of routing and forces multi-gigahertz layout discipline. Designers use low-loss dielectrics, smooth copper foils, tight differential-pair matching, and careful via design to keep insertion loss within spec, and they deploy redrivers or retimers on long paths, particularly to M.2 sockets and slots placed far from the CPU. Lane bifurcation and switch chips route limited CPU lanes among x16 graphics, x4 NVMe, and chipset uplinks, while equalization and reference clock distribution must remain stable across every topology the BIOS exposes.
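The arithmetic behind these lane trade-offs can be sketched in a few lines. The following is a minimal illustration, not a vendor tool: the function names and the 24-lane CPU are hypothetical, and the throughput figure is the raw line rate after 128b/130b encoding, before any packet or protocol overhead.

```python
# Per-lane signaling rates in GT/s, as quoted in the text.
PCIE_GTS = {"3.0": 8, "4.0": 16, "5.0": 32}

# PCIe 3.0 through 5.0 use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def link_gbytes_per_s(gen: str, lanes: int) -> float:
    """Raw one-direction link throughput in GB/s, before packet overhead."""
    return PCIE_GTS[gen] * ENCODING_EFFICIENCY * lanes / 8


def lane_budget(cpu_lanes: int, devices: dict[str, int]) -> int:
    """Return lanes left over; a negative value means the layout is oversubscribed."""
    return cpu_lanes - sum(devices.values())


if __name__ == "__main__":
    for gen in PCIE_GTS:
        print(f"PCIe {gen} x16: {link_gbytes_per_s(gen, 16):.1f} GB/s, "
              f"x4: {link_gbytes_per_s(gen, 4):.1f} GB/s")

    # Hypothetical CPU exposing 24 usable lanes (an assumption for illustration).
    layout = {"graphics": 16, "nvme_0": 4, "chipset_uplink": 4}
    print("lanes left:", lane_budget(24, layout))

    # A second CPU-attached SSD oversubscribes the budget, which is the
    # situation bifurcation, switch chips, or chipset-attached slots resolve.
    layout["nvme_1"] = 4
    print("lanes left with a second SSD:", lane_budget(24, layout))
```

A negative budget is the point at which a board must bifurcate the x16 slot, add a switch, or move the device behind the chipset uplink.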

Platform controllers, such as Intel’s PCH over DMI and AMD’s chipsets over PCIe links, further partition bandwidth, making lane budgeting a central board-level design exercise.

Power delivery advanced just as aggressively. Modern CPUs can swing from idle to turbo currents in microseconds, so boards employ multi-phase VRMs with smart power stages, high-current inductors, and carefully tuned transient response. ATX 3.0 and 3.1 set new PSU expectations for GPU load transients and introduced the 16‑pin 12VHPWR connector, since superseded by 12V‑2x6, while the motherboard’s PCIe slot still supplies up to 75 W under the CEM specification.
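To give a sense of the currents involved, here is a rough sketch of VRM arithmetic. All numbers are illustrative assumptions (a 250 W package at 1.25 V, 16 phases, a 1.0 mΩ effective load line), the function names are hypothetical, and the model ignores conversion losses and dynamic overshoot.

```python
def core_current_a(package_power_w: float, vcore_v: float) -> float:
    """Current the VRM must deliver for a given package power and core voltage."""
    return package_power_w / vcore_v


def per_phase_current_a(total_current_a: float, phases: int) -> float:
    """Average current per phase, assuming ideal current balancing."""
    return total_current_a / phases


def resistive_droop_v(current_a: float, loadline_mohm: float) -> float:
    """Steady-state voltage drop for a purely resistive load-line model."""
    return current_a * loadline_mohm / 1000


if __name__ == "__main__":
    # Assumed operating point, not taken from any specific CPU datasheet.
    total = core_current_a(package_power_w=250, vcore_v=1.25)
    print(f"total current: {total:.0f} A")
    print(f"per phase (16 phases): {per_phase_current_a(total, 16):.1f} A")
    print(f"droop at 1.0 mOhm: {resistive_droop_v(total, 1.0) * 1000:.0f} mV")
```

Even under these simplified assumptions, a couple of hundred amps at roughly one volt shows why phase count, inductor ratings, and heatsinking dominate the area around the socket.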

Load-line calibration, temperature-aware current balancing, and substantial heatsinking keep VRMs within limits during sustained boosts. On the memory side, DDR5 moved significant regulation onto the DIMM via an onboard PMIC, reshaping how boards route and filter power for memory channels.

Memory signaling illustrates the integration trade-offs. Each DDR generation tightened timing and integrity windows, pushing boards toward topologies and trace lengths that favor fewer DIMMs per channel for higher speed.
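The speed-versus-population trade-off can be put in numbers with a short sketch. The data rates below are illustrative assumptions rather than validated figures for any board, and the function name is hypothetical; the calculation is theoretical peak bandwidth for 64-bit-wide channels.

```python
def peak_bandwidth_gbs(data_rate_mts: int, channels: int, bus_bytes: int = 8) -> float:
    """Theoretical peak bandwidth in GB/s for 64-bit (8-byte) memory channels."""
    return data_rate_mts * bus_bytes * channels / 1000


if __name__ == "__main__":
    # Assumed example: one DIMM per channel runs faster than two per channel.
    one_dpc = peak_bandwidth_gbs(data_rate_mts=6000, channels=2)
    two_dpc = peak_bandwidth_gbs(data_rate_mts=5200, channels=2)
    print(f"1 DIMM per channel at 6000 MT/s: {one_dpc:.1f} GB/s")
    print(f"2 DIMMs per channel at 5200 MT/s: {two_dpc:.1f} GB/s")
```

In this hypothetical case, populating every slot doubles capacity but gives up peak bandwidth, because the extra electrical load on each channel lowers the attainable data rate.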

DDR5 adds on-die ECC for array reliability and requires per-DIMM training managed by firmware, while the PMIC on the module reduces board-side regulation complexity but increases coordination between SPD data, BIOS memory training, and power sequencing. Manufacturers publish qualified vendor lists (QVLs) after validating specific DIMM configurations because minute changes in trace length, via stubs, and socket placement can decide whether advertised speeds are attainable. Overclocking profiles like XMP and EXPO exist within these constraints, and server platforms trade peak frequency for more channels and capacity.

The I/O mix has become a puzzle of overlapping standards. USB4 and Thunderbolt require Type‑C retimers, USB‑PD controllers, firmware-managed alternate modes, and careful EMI control to coexist with Wi‑Fi and Bluetooth radios on the same board. DisplayPort Alt Mode shares pins with high-speed USB, while PCIe tunneling over USB4 adds routing decisions that must not starve native PCIe lanes reserved for NVMe. Networking ranges from integrated 2.5 GbE PHYs to 10 GbE controllers with their own SerDes and thermal needs, plus M.2 Key‑E sockets for Wi‑Fi modules that share antennas with Bluetooth. Audio codecs, front-panel USB 3.2 Gen2x2 headers, and legacy SATA all compete for board space and routing channels, and each must fit without violating compliance margins or radiated emissions limits.

Mechanical and thermal design evolved alongside electronics to keep everything reliable. Higher VRM currents drove the move to large finned heatsinks, heat pipes, and sometimes backplates to spread heat away from power stages clustered around the CPU socket. PCIe 5.0 M.2 slots introduce tight bends and longer runs that often require retimers, and the resulting SSDs tend to ship with substantial heatsinks or even small fans, which boards must accommodate without colliding with GPU coolers. Form factors like ATX, microATX, and Mini‑ITX constrain layer counts, slot spacing, and keep‑out zones; server boards in SSI‑CEB or EEB formats provide more layers and traces for multi‑socket or high‑channel memory topologies. The external look, with its RGB shrouds and decorative shields, conceals a functional purpose: controlled airflow and shielding for dense high-speed regions.

Firmware became the orchestrator that makes heterogeneous standards behave coherently. UEFI replaced legacy BIOS, enabling complex initialization for PCIe equalization, DDR5 training, USB4 policy, and security features like Secure Boot and TPM 2.0 or firmware TPM. Platform microcode and vendor frameworks, such as AMD’s AGESA and Intel’s reference code, deliver continual improvements in memory compatibility, boost behavior, and device enumeration, extending the useful life of a board across CPU and peripheral refreshes.

Enthusiast options like PCIe lane bifurcation, Resizable BAR, and ASPM tuning expose trade-offs between performance, compatibility, and power. In servers, a dedicated BMC with standards like IPMI and Redfish adds remote management, telemetry, and field updates independent of the host CPU.

Integration across so many domains forced new validation practices. Boards undergo compliance testing for PCIe 5.0 eye diagrams and receiver margins, USB4 link training and interoperability, and DDR5 timing at rated speeds, often iterating firmware to resolve corner cases discovered with retail devices. Electromagnetic compatibility and coexistence testing ensure that high-speed lanes do not drown out wireless modules or sensitive audio paths, while thermal chambers and power transient tests verify behavior under worst-case boosts defined by modern CPU power management. Qualified vendor lists, public BIOS updates, and clear lane maps in manuals are the visible result of this work, helping users navigate trade-offs like disabling SATA ports when certain M.2 slots are populated.

The modern motherboard is therefore the quiet backbone that reconciles bleeding-edge signaling with pragmatic backward compatibility. It carries PCIe 5.0 at 32 GT/s alongside USB4 tunnels, feeds bursty multi‑hundred‑watt loads without excessive droop, and still offers headers for older peripherals.

As PCIe 6.0 with PAM4 signaling and forward error correction reaches mainstream platforms following initial server adoption, boards will further tighten their signal budgets and rely more on retimers and low‑loss materials. At the same time, continued CPU integration will shift some functions off the board, but the job remains the same: allocate finite lanes, power, and thermals so that disparate standards feel seamless to the user.
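The appeal of PAM4 is easiest to see by comparing fundamental frequencies. The sketch below is a simplified model with a hypothetical function name: it treats the Nyquist frequency as half the symbol rate and ignores FEC and encoding details.

```python
import math


def nyquist_ghz(rate_gts: float, levels: int) -> float:
    """Nyquist frequency in GHz for a given per-lane rate and number of signal levels."""
    bits_per_symbol = math.log2(levels)     # NRZ: 1 bit, PAM4: 2 bits
    symbol_rate_gbaud = rate_gts / bits_per_symbol
    return symbol_rate_gbaud / 2


if __name__ == "__main__":
    print("PCIe 5.0, NRZ at 32 GT/s:", nyquist_ghz(32, 2), "GHz")
    print("PCIe 6.0, PAM4 at 64 GT/s:", nyquist_ghz(64, 4), "GHz")
    print("64 GT/s if it had stayed NRZ:", nyquist_ghz(64, 2), "GHz")
```

Doubling the data rate with four levels instead of two keeps the Nyquist frequency where PCIe 5.0 left it, at the cost of smaller voltage margins between levels, which is why forward error correction and tighter signal budgets accompany the change.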